What is document AI, and how does it work? This primer on all things artificial intelligence and document-based data was written by:

Dr. Chris Dearner,

Grooper Product Manager

14 Truths About How Document AI Works to Get Data:

- What is artificial intelligence?

- What is machine learning?

- What is supervised machine learning?

- What is unsupervised machine learning?

- What is broad vs. narrow AI?

- What is natural language processing?

- What is deep learning?

- What is a neural network?

- How do neural nets work?

- What is Tensorflow?

- What is a GAN?

- What is TF-IDF?

- What is computer vision?

- The brutal truth about AI

Plus: Document AI FAQs

#1 - First, What is Artificial Intelligence?

AI, from Wikipedia:

In computer science, artificial intelligence (AI), sometimes called machine intelligence, is intelligence demonstrated by machines, in contrast to the natural intelligence displayed by humans.

Leading AI textbooks define the field as the study of "intelligent agents": any device that perceives its environment and takes actions that maximize its chance of successfully achieving its goals.

Leading AI textbooks define the field as the study of "intelligent agents": any device that perceives its environment and takes actions that maximize its chance of successfully achieving its goals.

Colloquially, the term "artificial intelligence" is often used to describe machines (or computers) that mimic "cognitive" functions that humans associate with the human mind, such as "learning" and "problem solving".

As machines become increasingly capable, tasks considered to require "intelligence" are often removed from the definition of AI, a phenomenon known as the AI effect.

A quip in Tesler's Theorem says "AI is whatever hasn't been done yet." For instance, optical character recognition is frequently excluded from things considered to be AI, having become a routine technology.

Modern machine capabilities generally classified as AI include successfully understanding human speech, competing at the highest level in strategic game systems (such as chess and Go), autonomously operating cars, intelligent routing in content delivery networks, and military simulations.

Two Sides of Artificial Intelligence

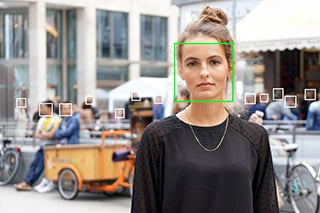

AI is a loose term, but mostly means using computers to solve problems that are generally understood to require “intelligent” judgement, such as recognizing faces, driving cars, classifying documents, making medical decisions, among others.

AI is a loose term, but mostly means using computers to solve problems that are generally understood to require “intelligent” judgement, such as recognizing faces, driving cars, classifying documents, making medical decisions, among others.

Many, if not most, AI tasks in the real world involve either:

- Classification of inputs into categories or

- Generation of content similar to a training set.

When you read about AI being used to, for example, screen resumes, predict the risk of recidivism, or diagnose cancer, it falls under the former category.

If you see an AI that generates human-like text, it’s the latter category.

BIG TIP: When people talk about “AI” in the news or in the industry, they usually mean something as general as “using computers to do something hard.”

This may involve machine learning; it may not. They may also be thinking of using neural networks or other sophisticated machine learning systems to solve problems without much human guidance; more on this later.

#2 - What is Machine Learning?

Machine Learning, from Wikipedia:

Machine learning (ML) is the scientific study of algorithms and statistical models that computer systems use to perform a specific task without using explicit instructions, relying on patterns and inference instead.

Machine learning (ML) is the scientific study of algorithms and statistical models that computer systems use to perform a specific task without using explicit instructions, relying on patterns and inference instead.

It is seen as a subset of artificial intelligence. Machine learning algorithms build a mathematical model based on sample data, known as "training data", in order to make predictions or decisions without being explicitly programmed to perform the task.

Machine learning algorithms are used in a wide variety of applications, such as email filtering and computer vision, where it is difficult or infeasible to develop a conventional algorithm for effectively performing the task.

Machine Learning is a way to build systems that display artificial intelligence (read: can solve hard problems).

Generally speaking, it is a strategy that builds systems that:

- Are not specifically programmed to perform their task until they are given training data

- Get better (to a point) at performing their task when exposed to more data or more inputs

Machine Learning in Document AI

Machine learning algorithms are generally divided into two types: supervised and unsupervised.

Intelligent document processing (IDP), classification, separation, and extraction systems all incorporate Machine Learning algorithms.

Generally, when people are concerned with ML, chances are they’re really concerned with how much work they’re going to have to do to get Grooper to process their data. More on that below, following the section on Unsupervised ML.

#3 - What is Supervised Machine Learning?

Supervised ML, from Oracle’s Data Science Blog:

“Supervised learning is so named because the data scientist acts as a guide to teach the algorithm what conclusions it should come up with. It’s similar to the way a child might learn arithmetic from a teacher.

"Supervised learning requires that the algorithm’s possible outputs are already known and that the data used to train the algorithm is already labeled with correct answers.

"For example, a classification algorithm will learn to identify animals after being trained on a dataset of images that are properly labeled with the species of the animal and some identifying characteristics.”

How Supervised Machine Learning Applies to Document AI

This is how the central machine learning algorithm in document AI works: the Grooper Architect determines a discrete set of categories, and gives an initial set of properly labeled training for the algorithm to begin making predictions.

Grooper is designed this way because human-supervised ML works better, especially for the sorts of complicated problems that are our bread and butter.

#4 - What is Unsupervised Machine Learning?

Unsupervised ML, from Oracle’s Data Science Blog:

“On the other hand, unsupervised machine learning is more closely aligned with what some call true artificial intelligence — the idea that a computer can learn to identify complex processes and patterns without a human to provide guidance along the way …

“On the other hand, unsupervised machine learning is more closely aligned with what some call true artificial intelligence — the idea that a computer can learn to identify complex processes and patterns without a human to provide guidance along the way …

"While a supervised classification algorithm learns to ascribe inputted labels to images of animals, its unsupervised counterpart will look at inherent similarities between the images and separate them into groups accordingly, assigning its own new label to each group.”

Why Unsupervised Machine Learning Does Not Apply to Document AI

Grooper document AI does not use unsupervised machine learning, because it:

- Requires very large data sets to work at all

- Doesn’t work very well

- Will the AI get better at classifying (or extracting) on its own?

- Will AI save me from having to understand my documents or data?

The answer to both of these questions is no.

Document AI doesn’t get better on its own, because human design of intelligent document processing systems achieves better outcomes than unsupervised ML in almost every case.

BIG TIP: Nothing – not even Amazon, or Azure, or Watson – will save you from having to understand your own documents and data. (Even Google Cloud's DocAI uses relies on human review to extract information from paperwork).

If someone tells you otherwise, they’re lying; and if someone you’re talking to believes that Grooper (or any other system) will save them from their own document or data problems, they’re setting themselves up for failure.

Some Other General AI Terms

#5 - What is Broad vs Narrow AI?

Broad vs Narrow AI, from Forbes:

“The general AI ecosystem classifies these AI efforts into two major buckets: weak (narrow) AI that is focused on one particular problem or task domain, and strong (general) AI that focuses on building intelligence that can handle any task or problem in any domain.

“The general AI ecosystem classifies these AI efforts into two major buckets: weak (narrow) AI that is focused on one particular problem or task domain, and strong (general) AI that focuses on building intelligence that can handle any task or problem in any domain.

"From the perspectives of researchers, the more an AI system approaches the abilities of a human, with all the intelligence, emotion, and broad applicability of knowledge of humans, the “stronger” that AI is.

"On the other hand the more narrow in scope, specific to a particular application the AI system is, the weaker it is in comparison.”

Weak (or Narrow) AI vs. Broad (or Strong, or General) AI is a pretty easy distinction:

- Narrow AI solves one or a small number of related problems

- Broad AI solves a wide range of them

Narrow AI exists in a number of different domains. But Broad AI?

Broad AI doesn’t exist. Broad AI will never exist. Broad AI was 10-20 years away in 1980. Broad AI was 10-20 years away in 2000. Broad AI remains 10-20 years away in 2020.

See the pattern? More about this later.

#6 - What is Natural Language Processing?

NLP, from Wikipedia:

Natural language processing (NLP) is a subfield of linguistics, computer science, information engineering, and artificial intelligence concerned with the interactions between computers and human (natural) languages, in particular how to program computers to process and analyze large amounts of natural language data.

NLP's Relationship With Document AI

Natural Language Processing really just refers to using computers to process human language. Most Grooper tools are NLP tools: extractors, regular expressions, OCR, TF/IDF, all involve the processing of natural language.

Porter stemming is a form of NLP we use, and Grooper also integrates with Azure Translate to provide translation.

Other advanced NLP techniques, such as sentiment analysis, part-of-speech tagging, named entity tagging, etc. are theoretically possible with Grooper but not included out-of-the-box.

#7 - What is Deep Learning?

Deep learning, from Wikipedia:

Deep learning (also known as deep structured learning or hierarchical learning) is part of a broader family of machine learning methods based on artificial neural networks. Learning can be supervised, semi-supervised or unsupervised.

Why Deep Learning Technology Isn't Great with Getting Document Data

Generally, deep learning is synonymous with neural-network-based AI. Deep learning models typically involve multiple layers, each of which extracts different “features” from the data.

But unless you’re talking to an AI researcher, people generally just mean big, complicated AI systems (think Watson or Tensorflow) when they talk about “deep learning” as a concept.

But unless you’re talking to an AI researcher, people generally just mean big, complicated AI systems (think Watson or Tensorflow) when they talk about “deep learning” as a concept.

They may be thinking of neural networks specifically, either with or without a great understanding of what these are. More on neural networks in the next section.

Grooper doesn't use deep learning, because it doesn’t work very well for the sorts of problems we solve.

How is Document AI Intelligent?

Keep in mind that since “AI” is an incredibly general term – it can mean as little as “doing difficult things with computers” – it’s impossible to mention all of the methods and algorithms that might be considered AI.

Luckily for us, when people talk about AI, they are usually thinking of a relatively small number of things.

The (Sort of) Bleeding Edge: Neural Networks

#8 - What is a Neural Network?

(Artificial) Neural Network, from Wikipedia:

Artificial neural networks (ANN) or connectionist systems are computing systems that are inspired by, but not identical to, biological neural networks that constitute animal brains. Such systems "learn" to perform tasks by considering examples, generally without being programmed with task-specific rules.

For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat" and using the results to identify cats in other images. They do this without any prior knowledge of cats, for example, that they have fur, tails, whiskers and cat-like faces.

For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat" and using the results to identify cats in other images. They do this without any prior knowledge of cats, for example, that they have fur, tails, whiskers and cat-like faces.

Instead, they automatically generate identifying characteristics from the examples that they process.

Neural nets are important not only because they are the most important type of AI in development today, but because the philosophy underpinning them is the core philosophy of most AI research.

What I’d like to point to is two things:

- Neural networks aim to generate human-like decisions by imitating a highly simplified model of what we currently understand the brain to be structured like

- The way in which neural networks match “inputs” (a picture of a cat) to “outputs” (the label “cat”) is unpredictable and, in a fundamental way, unintelligible (that is – we can’t understand it)

What the definition above includes is that they’re able to make these decisions without any prior knowledge of cats; what it omits is that the decision process of neural networks, once developed, may bear little or no relationship to human decisioning.

Which gets us to those “philosophical underpinnings” I mentioned earlier – using neural nets to do AI (solve complicated problems) assumes – or asserts - that by imitating a simplified model of the brain we’ll get not only as-good-as-human judgments, but judgments that are ultimately better than the ones humans would make, for reasons we ultimately won’t understand

Remember, it’s not the whiskers and the fur that lets a neural network know it’s looking at a picture of a cat. What is it? Who knows... I’ll leave aside the inherent contradiction here (that a simplified model of the brain will generate better results than a human can) and leave it at that for now. We’ll talk more about the limitations of Neural Net-based document AI later.

#9 - How Do Neural Nets Work?

.png?width=300&name=Neural-Network-Graphic-2(002).png) A neural network, in the most general sense, consists of three layers: an input layer, a hidden layer, and an output layer.

A neural network, in the most general sense, consists of three layers: an input layer, a hidden layer, and an output layer.

The hidden layer does the bulk of the information processing, and consists of nodes connected to each other in a weighted manner.

When the network processes input, these weighted connections determine how information flows through it and, ultimately, what decision it comes to (or what output it produces).

Neural networks have a mechanism to alter the weights of those connections based on learning. The complexity – and power – of neural networks comes from the number of nodes and layers, but the ways in which the hidden layer makes decisions are difficult to inspect – hence the name.

#10 - What is Tensorflow?

Tensorflow, from tensorflow.org:

TensorFlow is an end-to-end open source platform for machine learning. It has a comprehensive, flexible ecosystem of tools, libraries and community resources that lets researchers push the state-of-the-art in ML and developers easily build and deploy ML powered applications.

Tensorflow is Google’s open-source library for building neural networks. Tensorflow makes it relatively easy for someone with a basic understanding of Python to build and train neural networks on home-desktop grade hardware.

It is used widely in both business and academic applications. You’re unlikely to hear anyone reference this directly (you might!) but it’s an important technology to be aware of.

#11 - What is a Gan?

From tensorflow.org:

Generative Adversarial Networks (GANs) are one of the most interesting ideas in computer science today. Two models are trained simultaneously by an adversarial process.

A generator ("the artist") learns to create images that look real, while a discriminator ("the art critic") learns to tell real images apart from fakes.

GANs are essentially two neural networks pitted against each other. They can be trained to generate strikingly realistic images – if you’ve seen pictures of AI-generated faces, those were produced by a GAN.

GANs are essentially two neural networks pitted against each other. They can be trained to generate strikingly realistic images – if you’ve seen pictures of AI-generated faces, those were produced by a GAN.

They are very, very, cool, but (as far as I’m aware) their practical application is still an open question. Again, you’re unlikely to run into someone asking about GANs in the wild, but they’re another important technology to be aware of.

Document AI Without Neural Nets

So, now that we’ve talked about neural nets (and, by association, deep learning), we can talk about the AI/ML algorithms that Grooper uses, and why these tend to work better for us. We’ll get into the limitations of neural-net based AI and unsupervised ML in the next section, too.

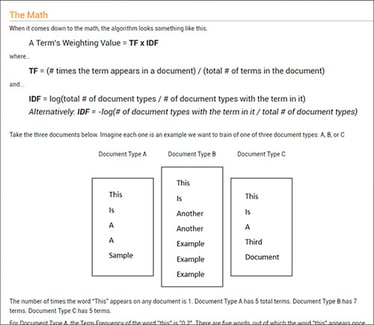

What is TF-IDF?

TF-IDF, from Wikipedia:

In information retrieval, tf–idf or TF-IDF, short for term frequency–inverse document frequency, is a numerical statistic that is intended to reflect how important a word is to a document in a collection or corpus. It is often used as a weighting factor in searches of information retrieval, text mining, and user modeling.

The TF-IDF value increases proportionally to the number of times a word appears in the document and is offset by the number of documents in the corpus that contain the word, which helps to adjust for the fact that some words appear more frequently in general.

The TF-IDF value increases proportionally to the number of times a word appears in the document and is offset by the number of documents in the corpus that contain the word, which helps to adjust for the fact that some words appear more frequently in general.

TF–IDF is one of the most popular term-weighting schemes today; 83% of text-based recommender systems in digital libraries use TF–IDF.

Why TF-IDF Works Quite Well in Documents

We’re going to spend a minute talking about TF-IDF, because it’s the core AI (and only ML) algorithm that Grooper uses. TF-IDF stands for “term frequency/inverse document frequency,” and classifies documents (or “collections”) by comparing how frequently words are seen on the target document types versus how frequently they occur in the sample set as a whole.

We’re going to spend a minute talking about TF-IDF, because it’s the core AI (and only ML) algorithm that Grooper uses. TF-IDF stands for “term frequency/inverse document frequency,” and classifies documents (or “collections”) by comparing how frequently words are seen on the target document types versus how frequently they occur in the sample set as a whole.

Without getting too much in the weeds, TF-IDF works by identifying words (or inputs) that are unique (or more common) in a particular type of document compared to the document set overall. It’s a deceptively simple way of classifying documents, and it generally does so in a similar way to how humans do it: by looking at the individual words on the document.

Now, it’s worth noting that TF-IDF doesn’t “read” or “understand” the words (virtually no ML algorithms do), and it’s not sensitive to where in the documents the words occur. It’s just counting words (or features, if your extractor isn’t feeding it words) to help automate data capture at scale.

Grooper does a few things that make TF-IDF work better than other ML algorithms.

First, and most importantly, it lets you feed TF-IDF anything.

Do you want the algorithm to look at words? Two word pairs? Names of mammals? The presence of dates or names? The words “phantom” and “empire?”

Good. Great. Fantastic, even. You can write extractors to feed that into the algorithm. This is crucial, because it lets a human determine which types of features are most likely to matter for a given document – and there are some types of documents (think a Mineral Ownership Report) where the content of the words won’t ever tell you what the document is.

Our TF-IDF implementation also lets architects inspect the feature weightings, which lets them directly and completely understand how it’s making decisions. This is not possible using Neural nets! And generally not available in other systems, even those implementing TF-IDF or similar algorithms.

TF-IDF also requires a relatively small number of training samples (generally less than 100, although not always so) to reach optimum decision making, which makes it much quicker to train than most machine learning algorithms.

How TF-IDF Works in Grooper Document AI

TF-IDF can be used in data extraction, separation, and classification within Grooper. In separation and classification, it lets you work with unstructured documents.

In extraction, it lets you pick out the correct instance of a value type on a page (e.g. tell date of birth from date of service) by looking at features surrounding the detected value.

If people ask about ML in Grooper, ours is:

- Faster

- Easier to train

- Provides more insight and

- More control over the classification process

This requires more touch (initially) than unsupervised ML, but it gets much better results over a wide range of problems.

#13 - What is Computer Vision, and How Does It Find Data in Documents?

Grooper also implements a number of other algorithms – generally around image processing – that could be called AI algorithms.

Grooper also implements a number of other algorithms – generally around image processing – that could be called AI algorithms.

These include our line and OMR box recognition, our blob detection (used in both blob removal and deskew), our ability to remove, e.g. combs from lines, and our periodicity detection.

The Grooper unified console uses a number of Computer Vision algorithms (many developed in-house) that provide best-in-class ability to clean up documents for OCR and detect non-character-based semantic (information containing) elements on the document such as lines, boxes, etc.

#14 - The Brutal Truth About AI

Here’s a fairly representative paragraph about AI (emphasis mine):

Artificial intelligence has been conquering hard problems at a relentless pace lately.

In the past few years, an especially effective kind of artificial intelligence known as a neural network has equaled or even surpassed human beings at tasks like discovering new drugs, finding the best candidates for a job, and even driving a car.

Neural nets, whose architecture copies that of the human brain, can now—usually—tell good writing from bad, and—usually—tell you with great precision what objects are in a photograph.

Such nets are used more and more with each passing month in ubiquitous jobs like Google searches, Amazon recommendations, Facebook news feeds, and spam filtering—and in critical missions like military security, finance, scientific research, and those cars that drive themselves better than a person could.

This was written in 2015 (you can find the article here), and the claims it makes are fairly typical of what people tend to say about AI.

They are also all incorrect.

BIG TIP: AI is not equal to or better than humans at discovering new drugs, finding the best candidates for a job, and even driving a car.

Neural nets cannot generally tell you what objects are in a photograph.

AI cannot tell good writing from bad. (Astute readers will also note that neural net architecture does not copy that of the human brain).

AI Demos Set Very High Expectations

I tried to track down the source of these claims, and generally couldn’t – which is also representative of claims people make about AI.

Big, bold, elusive, and almost universally somewhere from an overstatement to an outright falsehood.

This might not sound quite right to you, though – maybe you’ve seen Azure translate at work, or even Azure image recognition. Maybe you’ve seen other demonstrations of AI that look cool and powerful, and make it seem like there’s very little AI can’t do.

If you’re in sales or marketing, or just a generally skeptical person, you can probably guess what’s going on here: AI demonstrations generally engage in a lot of expectation shaping, and generally have very sturdy guardrails set up just out of sight.

Will Document AI Ever Have General Intelligence?

If I — for example — show you an AI that can correctly categorize an in-focus, good quality picture of:

- A dog

- A cat and

- A house

Does that really prove that it can “tell you what objects are in a photograph?”

If I can build an AI-based program to win Jeopardy, or to beat human grand masters at Chess, does that mean it’s going to be good at other things?

Here’s the central trick that discourse around AI plays on us: by claiming to be based on the structure of the brain, the implication is that AI-based “intelligence” should, will, or can work like human intelligence.

Here’s the central trick that discourse around AI plays on us: by claiming to be based on the structure of the brain, the implication is that AI-based “intelligence” should, will, or can work like human intelligence.

Remember where the author says that neural networks “copy” the structure of the human brain? Ken Jennings is a pretty smart guy, so he’s good at a lot of things that aren’t Jeopardy. My co-worker Randall can identify dogs in pictures, so he can also identify a lot of other things.

But AI doesn’t – and won’t ever – work like that. There is absolutely zero evidence that a trained neural network – or any other AI system – has or can have anything like generalized intelligence. Absolutely none.

The only reason people expect that behavior from AI is because they

- Don’t understand the technology

- Don’t understand the assumptions (what I previously called “philosophical underpinnings”) behind the technology

- Have a very, very impoverished understanding of human cognition

Expecting neural networks to be able to solve problems they’ve never seen before because you can train them to do lots of different things is a little bit like thinking because you can build a lot of different jigs to hold a router that, at some point, it will be able to mill things on its own.

I promise you - it will not.

In the Future, What Document AI Tech Will Help Us With Our Problems?

What this means for us – and for our industry – is that AI and ML won’t save us. Document AI in your environment won’t:

- Save you from having to understand your own documents

- Save you from having to solve hard problems

- Simply turn documents into data without any effort

- Enrich data or parse forms on complicated invoices or receipts without human review.

AI-based tools can be incredibly helpful, but they’re only ever going to be part of a full solution. Intelligent document processing isn’t a narrow problem, and it’s never going to be. That’s why AI won’t save you.

AI-based tools can be incredibly helpful, but they’re only ever going to be part of a full solution. Intelligent document processing isn’t a narrow problem, and it’s never going to be. That’s why AI won’t save you.

So, having said all that, I am going to say one other thing – document AI is, in fact, the future. It is already doing a great job at transforming documents into structured data, boosting the speed of decision-making and unlocking business value.

Remember how we defined AI as “using computers to do something hard?” That won’t stop happening.

But it won’t happen in the way people expect it — or with the tools people now think are revolutionary.

Document AI FAQs:

What is Document AI?

Document AI is a technology that uses artificial intelligence tools to automate the processing of documents in order to extract all important data. Document AI solutions were developed based on decades of document research to provide basic document retrieval and analyzation abilities of common enterprise documents such as invoices, simple forms, statements, and receipts.

What are the Benefits of Document AI?

Using artificial intelligence to get data and knowledge out of documents brings many benefits. These include, but are not limited to:

- Improved Decision Making: Enterprises make better and faster business decisions through unlocking data and making it accessible to many users and business intelligence applications.

- Enhanced Operational Efficiency: Particularly valuable knowledge can come from extracting structured data from unstructured documents and unlocking their insights.

- Ensured Compliance: Instead of using manual entry work that is rife with errors, automated document processing keeps data accurate and compliant. It also reduces guesswork as it can automate data validation (or provide human-in-the loop validation) to streamline compliance workflow.

- Happier Customers: Leveraging more data increases insights into customer and client data. Cost and time efficiencies can be passed onto customers, to meet and exceed their expectations.

- Other benefits related to customers involve CSAT, advocacy, spending and a customer's lifetime value.

- Security: Many of these solutions include sound security models and world-scale infrastructure to keep your data secure.

About the Author: Chris Dearner

Chris Dearner is currently the Grooper product manager for BIS, an Oklahoma software company specializing in data extraction and integration. Chris is concurrently a PhD candidate at the University of California, Irvine, writing a dissertation on affordances and embodied action in Renaissance drama. His other work includes digital interactive art, with work currently on exhibit in Tulsa, Oklahoma, and past work featured in Oklahoma City, Oklahoma and Bilbao, Spain.