With today's technology, it's all about speed - taking advantage of modern architecture. But I can remember when OCR software was on a full-length computer board.

Not just a chip, but a full-length board - it wasn’t until a Pentium 90 that OCR got fast enough to run on a “modern” PC.

Back in The Day When Optical Character Recognition Software Left a Lot to Be Desired

Back then, you OCR’d the whole page and performed fuzzy searches to find your data. Or you grabbed a subset of data for archival purposes.

But you never trusted the OCR data unless you could validate it against a database. There just weren’t very many sources available to validate against.

You also only captured what you needed because most systems charged extra for full-text OCR.

Not Sure How Optical Character Recognition Software Works? No Problem

Let’s back up a bit though and explore what is optical character recognition software.

![]() At it's core, it's is a machine learning algorithm. It tries to match letters and words from pixels. Initially, only from black and white pixels - not a lot of data to choose from!

At it's core, it's is a machine learning algorithm. It tries to match letters and words from pixels. Initially, only from black and white pixels - not a lot of data to choose from!

We’ve been doing this so long, we’ve figured out some other things about OCR as well. Machine learning algorithms do better with less data.

So we patented a process that takes advantage of that premise:

- OCR the document

- Remove the characters that the machine was confident about

- OCR the remaining characters again

We actually get better results with this process. Segment the document into smaller chunks and OCR works better. The same OCR engine will yield different results with these methods. This is what 35 years of experience gets you.

Interestingly enough, the OCR engines themselves haven’t changed a whole lot. The same documents still yield the same results. You hear “95% accurate” and higher all the time.

Interestingly enough, the OCR engines themselves haven’t changed a whole lot. The same documents still yield the same results. You hear “95% accurate” and higher all the time.

So the results are the results. The problem with that 5% error rate is the cost of fixing errors...

DID YOU KNOW? There’s a data entry theory around the cost of errors:

Basically, an OCR error costs 10 times more to fix in-process, and 100 times more if it’s not discovered until after the fact!

So when a 5% error rate translates to 50% of the data entry labor – that’s significant. Anyone using a system that’s operating on manual data entry standard error rates (somewhere between 1 and 5%) is spending up to half of their labor fixing errors.

The alternative? Double-blind keying, which DOUBLES the data entry effort.

So What Change Has Drastically Reduced Optical Character Recognition Software's Error Rate?

The difference is in the preparation of the document before it goes through the OCR process.

Most companies are using the same few algorithms (some even use open source) for image cleanup. Open source is great for keeping costs down, but not necessarily the best in every domain, and this is especially true in the imaging/OCR domain.

Color + Algorithms

So we took a cue from one of the last real innovations in the capture industry:

We scan in color in order to run better algorithms and get cleaner document images that result in much better OCR. We wrote our own library of over 70 image cleanup algorithms.

Our most recent algorithms are designed for preparing microfilm. We took the work and research around computer vision and applied it to our industry domain.

But That's Not All. We Also Created a System to Catch Any Remaining Errors

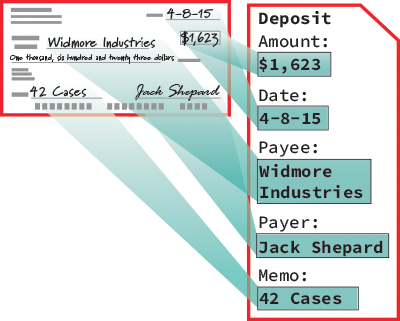

We’ve gone farther still. When you get the results from optical character recognition software, and a few of the results are bad, what do you do now?

In every other system I’ve worked with, when you get an OCR error — say a 5 instead of an S, or a high confidence with a bad character — you’re stuck with the OCR error.

Not with us. We’ve built a system that understands the common OCR errors and allows you to tune them for your project as needed. The results are great data from really poor OCR’d data.

I’m oversimplifying, but you get the point. We’ve figured out how to compensate for common OCR errors using machine learning and natural language processing (NLP).

The New, Innovative Ways that Optical Character Recognition Software is Now Being Used

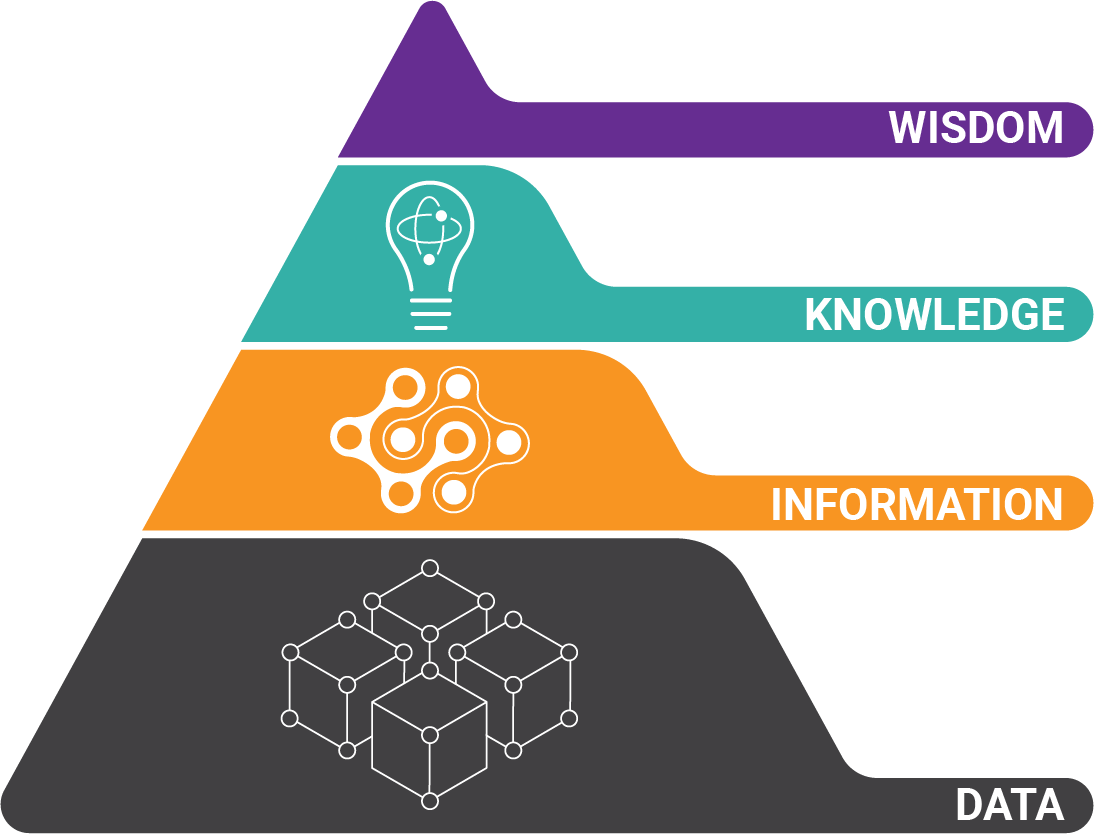

Using a layered AI approach, we’ve figured out how to use this in the document domain, rather than bolting on someone else’s library.

Using a layered AI approach, we’ve figured out how to use this in the document domain, rather than bolting on someone else’s library.

The result being, OCR’d data is just text. And guess what?

So are full-text PDFs, so are emails, so are a lot of documents that companies get. We’ve spent a lot of engineering time on being able to normalize text data.

Just because I get a full-text PDF doesn’t mean that data is easily extracted. It doesn’t mean that data will fit into my target system. We've fix that. It’s extract transform load (ETL) for documents.

The Final Frontier for Optical Character Recognition Software: Handwriting Recognition

Before 2020, I would strongly caution someone from trying to do anything except the very basic structured extraction for handprint.

Before 2020, I would strongly caution someone from trying to do anything except the very basic structured extraction for handprint.

However, between the preparation we can do, and layering recognition engines, we are now able to get incredible results from handwritten data.

And I’m still very skeptical and cautious. But we’ve sold systems this year that successfully recognized unstructured handprint data.

I wouldn’t believe it, if I hadn’t been part of it myself.

About the Author: Tim McMullin

Tim McMullin brings extensive sales and solution design experience to help customers and partners successfully meet their business objectives.

With more than 25 years of technical and sales expertise in the enterprise document and data capture space, Tim is responsible for managing Grooper sales at BIS. His leadership focuses on the expansion of all things Grooper, especially the channel and traditionally underserved verticals.