What is Intelligent Character Recognition?

Intelligent Character Recognition (ICR) is an advanced form of Optical Character Recognition (OCR) that is used to recognize handwritten text and convert it into computer-readable digital text.

ICR uses algorithms and the latest AI to recognize a variety of different handwriting styles or fonts and improve character (text) recognition and accuracy on paper-based documents.

Once these documents are scanned, ICR is performed to recognize text, and the extracted data is stored digitally in a database or ECM system. The data can then be leveraged in business workflows, integrated into reports, and easily found by searching the ECM system.

OCR technology is significantly improved by ICR's ability to process different handwriting styles, facilitating data extraction from both structured and unstructured text documents.

With each handwriting style it encounters, ICR uses artificial neural networks to improve its accuracy, incorporating the new data to expand and upgrade its recognition database.

ICR is a valuable tool for industries or businesses that work daily with large volumes of forms, applications, letters, and other documents with handwriting. Industries that use Grooper's ICR today include: healthcare, finance / banking, insurance, law, government, and higher education.

Many of our clients depend on accurate documentation for managing consumer records, so capturing data with as close to 100% accuracy as possible is of utmost importance. This is where ICR plays a vital role. It is a simple but robust capture tool for reducing errors while also saving human resources like time and effort.

Table of Contents:

- ICR vs OCR - What's the Difference?

- What are the Benefits of ICR?

- How Does ICR Work?

- What is Intelligent Word Recognition?

- How Does ICR Recognize Proper Nouns and Other Important Words?

Get our Free AI + ICR Case Study!

A leading insurance company, struggling with manual data entry from handwritten contracts, transformed their operations using AI and ICR. You will learn:

- How many millions of dollars they saved

- How many thousands of hours of painstaking manual work were eliminated

- How much time they are saving their customers

Discover how transformative AI and intelligent character recognition technology are in real-world business applications.

What are the Benefits of Intelligent Character Recognition?

There are many benefits of using intelligent character recognition. Among those are:

- ICR makes handwriting OCR software more accurate as it recognizes a variety of new handwriting styles and fonts.

- You have more important data to make decisions from. Without an ICR component in the software, handwritten data on documents and forms would not be captured and would not make it into a content management system.

- This could make a big difference to accounts payable departments as AP documents have important handwritten information on them.

- Reduced manual data entry work and fewer data errors. Any improvement or addition to OCR boosts text recognition and data accuracy. This means much less data entry work is needed, with far fewer of the errors that come with manual data entry. At the end of the day, this saves any business time and money.

- Improved data security. Handwritten information can often contain Personally Identifiable Information (PII) in the form of names, social security numbers, or drivers license numbers.

- If your software doesn't have ICR, your enterprise may unknowingly circulate or store documents with PII, running the risk of privacy incidents. And privacy incidents can result in loss of employment, and civil or criminal penalties.

- ICR uses artificial intelligence and neural networks that help a system learn by itself with experience. This means that every time ICR encounters new data, its artificial neural networks learn from it, which improves the recognition database.

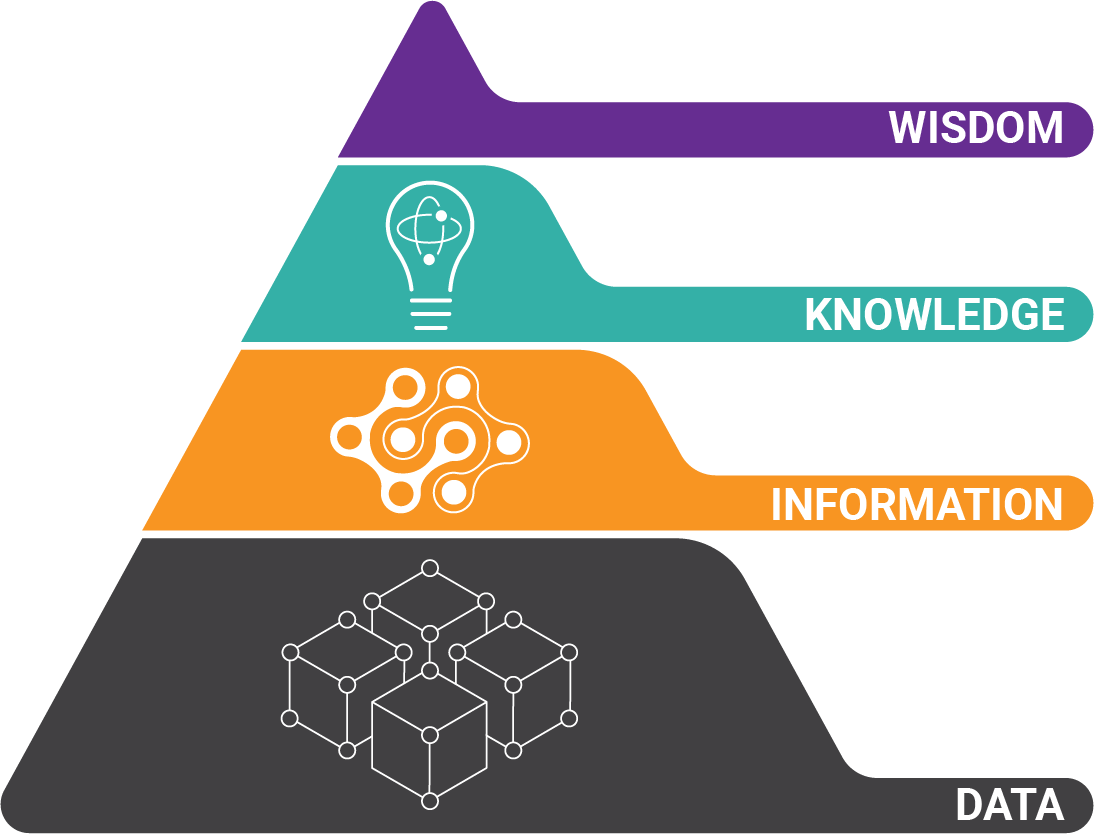

How Optical Character Recognition Works Without ICR

Traditional OCR uses a “matrix matching” algorithm to identify characters using pattern recognition. The character on the document’s image is compared to a stored example of each known character. By comparing a matrix of pixels between the character on the image and the stored example, the software determines, for instance, that the character is a “G”.

This seems like a good approach – but beware of the pitfalls. Because it is comparing text to stored examples pixel by pixel, the text must be very similar.

Even if there are hundreds of examples stored for a single character, problems often arise when matching text on poor quality images or using uncommon fonts.
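To make the pixel-by-pixel idea concrete, here is a minimal sketch of matrix matching in Python. The 5x5 bitmaps, the `TEMPLATES` table, and the function names are illustrative assumptions, not the internals of any particular OCR engine.

```python
# Hypothetical 5x5 bitmaps: '#' is ink, '.' is background.
TEMPLATES = {
    "G": [".###.", "#....", "#.###", "#...#", ".###."],
    "C": [".###.", "#....", "#....", "#....", ".###."],
    "O": [".###.", "#...#", "#...#", "#...#", ".###."],
}


def pixel_match_score(glyph, template):
    """Return the fraction of pixels that are identical in both bitmaps."""
    total = 0
    same = 0
    for glyph_row, template_row in zip(glyph, template):
        for a, b in zip(glyph_row, template_row):
            total += 1
            same += (a == b)
    return same / total


def matrix_match(glyph, templates=TEMPLATES):
    """Pick the stored example with the highest pixel agreement."""
    scores = {label: pixel_match_score(glyph, t) for label, t in templates.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# A scanned character that differs from the stored "G" by one pixel.
scanned = [".###.", "#....", "#.###", "#...#", ".##.."]
label, score = matrix_match(scanned)
print(label, score)
```

Because every pixel counts equally, a small shift, a smudge, or an unfamiliar font can lower the score quickly, which is the weakness described above.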

ICR vs. OCR - What's the Difference?

We have talked about the two technologies from a technical perspective. But what are the actual reasons why businesses use OCR vs ICR, and vice versa?

ICR

Intelligent character recognition (ICR) is a specialized form of OCR designed for identifying text in images, including handwritten documents. Unlike traditional OCR, ICR doesn't rely on pre-defined templates, making it highly adaptable to various document layouts.

Through training, ICR can be adjusted to recognize new handwriting styles and document changes. It's a more detailed and involved technology compared to standard OCR, as it can even flag potential inconsistencies or mathematical errors, requiring human review for verification.

ICR is a sub-set of OCR technology that can use the latest AI to recognize complex handwritten fonts and styles. By using modern AI tools like Microsoft Azure, ICR can adapt to new handwriting styles and convert handwritten documents into editable and searchable files.

OCR

- OCR recognizes text in documents.

- In most document capture software (especially legacy software), templates have to be manually created to enable OCR.

- Grooper does not require templates to be created to enable high quality OCR.

- OCR is great for companies who have a very structured and unchanging format for documents.

- OCR is a vast technology.

- OCR primarily focuses on printed documents.

- It has a limited ability to read handwriting and complicated fonts.

- It does not incorporate details in the documents.

- Manual intervention and review are required for OCR-based software.

How Does ICR Work?

Essentially, ICR systems scan documents and interpret all handwritten text and fonts by comparing them against handwriting databases. The software may ask the user to verify results.

To get more in-depth, here are the exact steps in the ICR process:

Image Capture

The ICR process begins when documents are loaded into an OCR / ICR scanner, camera, or other imaging device. Any text (handwritten or printed) is transformed from paper to image form, in JPG, PDF, PNG, or another format.

Pre-ICR Processing

Just before ICR can begin, the document images have to be cleaned up. Ideally, all noise or distortion is removed, lighting is optimized, and other non-text artifacts (like hole punches, staples, or lines) are removed.

The more a document image can be improved, and the more non-text artifacts can be removed, the more ICR data recognition accuracy improves.
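As a rough illustration of this cleanup step, the sketch below binarizes a tiny grayscale image and clears isolated specks. The fixed threshold of 128 and the "no inked neighbor" rule are simplifying assumptions; real pre-processing typically uses adaptive thresholds and more careful filters.

```python
def binarize(gray, threshold=128):
    """Convert grayscale values (0 = black, 255 = white) to 1 = ink, 0 = background."""
    return [[1 if px < threshold else 0 for px in row] for row in gray]


def despeckle(binary):
    """Clear ink pixels that have no ink in any of the 8 surrounding cells."""
    height, width = len(binary), len(binary[0])
    cleaned = [row[:] for row in binary]
    for y in range(height):
        for x in range(width):
            if not binary[y][x]:
                continue
            has_neighbour = any(
                binary[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
                if (ny, nx) != (y, x)
            )
            if not has_neighbour:
                cleaned[y][x] = 0
    return cleaned


gray_page = [
    [250, 250, 250, 250, 250],
    [250, 20, 30, 250, 250],
    [250, 25, 250, 250, 10],  # the lone dark pixel on the right is a speck
    [250, 250, 250, 250, 250],
]
clean_page = despeckle(binarize(gray_page))
for row in clean_page:
    print(row)
```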

Segmentation

In this phase, ICR divides a document image into individual characters or text strings. This makes the next phase, feature extraction, easier to execute.
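One simple way to picture segmentation is a column projection: wherever a column contains no ink, a character boundary is assumed. The sketch below is an illustration under that assumption. It only works for characters separated by blank columns, so touching or cursive characters would need a more sophisticated approach.

```python
def segment_columns(binary):
    """Split a binary text line into (start, end) column ranges separated by blank columns."""
    width = len(binary[0])
    ink_per_column = [sum(row[x] for row in binary) for x in range(width)]
    segments = []
    start = None
    for x, ink in enumerate(ink_per_column):
        if ink and start is None:
            start = x  # a character begins
        elif not ink and start is not None:
            segments.append((start, x))  # a blank column ends the character
            start = None
    if start is not None:
        segments.append((start, width))
    return segments


def crop(binary, start, end):
    """Cut one character out of the line image."""
    return [row[start:end] for row in binary]


# 1 = ink, 0 = background; three characters separated by blank columns.
line = [
    [1, 1, 0, 0, 1, 0, 1, 1, 1],
    [1, 0, 0, 0, 1, 0, 1, 0, 1],
    [1, 1, 0, 0, 1, 0, 1, 1, 1],
]
boxes = segment_columns(line)
print(boxes)
```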

Combining ICR and OCR

Intelligent character recognition engines work by combining both traditional and feature-based OCR techniques. The results of both algorithms are combined to produce the best matching result. Each character is given a “confidence score,” which reflects how closely the character's pixels, its features, or a combination of the two match a known character.

Even with this blended approach, the usual enemies of OCR remain: poor document quality, multiple font types, and different font sizes.
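A blended confidence score can be sketched as a weighted average of the two techniques. The weight of 0.6, the review threshold of 0.75, and the sample scores are made-up values for illustration; actual engines choose and tune their own scoring.

```python
def combine_scores(pixel_scores, feature_scores, feature_weight=0.6):
    """Blend two per-candidate score tables (values 0..1) into one confidence per candidate."""
    candidates = set(pixel_scores) | set(feature_scores)
    return {
        c: (1 - feature_weight) * pixel_scores.get(c, 0.0)
        + feature_weight * feature_scores.get(c, 0.0)
        for c in candidates
    }


def best_candidate(pixel_scores, feature_scores, review_threshold=0.75):
    """Return the top candidate, its confidence, and whether a human should review it."""
    combined = combine_scores(pixel_scores, feature_scores)
    label = max(combined, key=combined.get)
    confidence = combined[label]
    return label, confidence, confidence < review_threshold


# Hypothetical scores for one ambiguous character.
pixel = {"O": 0.80, "0": 0.82, "C": 0.70, "G": 0.66}
feature = {"O": 0.90, "0": 0.60, "C": 0.40, "G": 0.50}
label, confidence, needs_review = best_candidate(pixel, feature)
print(label, round(confidence, 2), needs_review)
```

In this example pixel matching alone slightly prefers "0", while the blended score prefers "O", which shows how the second technique can change the outcome.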

What is this character? Is it an "O", "0", "C" or “G”? Is it even a character or letter at all? Very hard to tell, especially for a machine.

So intelligent character recognition must make a decision and it may not make sense within the context of the word or sentence. If a human can’t read the character, then OCR will certainly have trouble.

Feature Extraction

From this point, each character is identified by its component features, rather than by comparing pixels to known examples.

So instead of using pixels to recognize this character...

Feature matching is used. It's often easier for software to execute recognition through looking at features (like a character's size, curve, and shape) that make up a character instead of random pixels. The result is that the margin of error is less. These orange lines are a stored glyph for the letter 'G'.

So ICR software compares the shapes in its stored glyphs to the character that was written or typed.

Features include lines, line intersections, and closed loops. ICR combines this feature analysis with traditional pixel-based processing to achieve high accuracy character recognition.

For example, an “O” is a closed loop, but a “C” is an open loop. These features are compared to vector-like representations of a character, rather than pixel-based representations. Because intelligent character recognition looks at features instead of pixels, it works well on multiple fonts and with handwritten characters.
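The closed-loop feature mentioned above can be sketched by counting background regions that are completely surrounded by ink. This is a simplified illustration using small hand-made bitmaps and a flood fill, not the feature extractor of any specific product.

```python
from collections import deque


def count_closed_loops(glyph):
    """Count background regions fully enclosed by ink ('#') in a small bitmap."""
    height, width = len(glyph) + 2, len(glyph[0]) + 2
    # Pad with background so everything outside the character is one connected region.
    grid = [["."] * width]
    grid += [["."] + list(row) + ["."] for row in glyph]
    grid += [["."] * width]
    seen = [[False] * width for _ in range(height)]

    def flood(start_y, start_x):
        queue = deque([(start_y, start_x)])
        seen[start_y][start_x] = True
        while queue:
            y, x = queue.popleft()
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                inside = 0 <= ny < height and 0 <= nx < width
                if inside and not seen[ny][nx] and grid[ny][nx] == ".":
                    seen[ny][nx] = True
                    queue.append((ny, nx))

    flood(0, 0)  # mark the outside background first
    loops = 0
    for y in range(height):
        for x in range(width):
            if grid[y][x] == "." and not seen[y][x]:
                loops += 1  # unreached background must be enclosed by ink
                flood(y, x)
    return loops


letter_o = [".###.", "#...#", "#...#", "#...#", ".###."]
letter_c = [".###.", "#....", "#....", "#....", ".###."]
letter_b = ["###.", "#..#", "###.", "#..#", "###."]
print(count_closed_loops(letter_o), count_closed_loops(letter_c), count_closed_loops(letter_b))
```

A loop count like this stays the same when a character is drawn larger, thinner, or slightly slanted, which is why features tolerate font and handwriting variation better than raw pixels.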

Now you know why intelligent character recognition is an improvement over standard OCR.

Pattern Recognition

Most intelligent character recognition software also uses machine learning algorithms like neural networks. These act like a recognition database that classifies and stores new handwriting patterns, character features, and styles.

In this step, ICR systems also use context analysis to examine words or sentences. This helps the software to compare the text to a dictionary and thus improve handwriting recognition accuracy.

Post-Processing

Without additional context, character recognition errors are understandable. But even if a character isn’t discernible, a human knows “ballboy” is an indie band from Scotland and “bollboy” is just gibberish:

The most common post-processing done by OCR engines is basic spell correction. Often, errors from poor recognition result in small spelling mistakes. All commercial OCR engines compare results with a lexicon of common words and attempt to make logical replacements.
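Basic lexicon-based correction can be sketched with Python's standard `difflib` module. The tiny `LEXICON` and the 0.8 cutoff are assumptions for illustration; commercial engines use far larger word lists and their own matching rules.

```python
import difflib

# Hypothetical lexicon of known words.
LEXICON = ["ballboy", "invoice", "patient", "claim"]


def correct_word(word, lexicon=LEXICON, cutoff=0.8):
    """Return the closest lexicon entry, or the word unchanged if nothing is close enough."""
    lowered = word.lower()
    if lowered in lexicon:
        return word
    matches = difflib.get_close_matches(lowered, lexicon, n=1, cutoff=cutoff)
    return matches[0] if matches else word


for raw in ["bollboy", "invoce", "zzzz"]:
    print(raw, "->", correct_word(raw))
```

Words with no close match are left alone, so the correction step does not invent replacements for text it cannot explain.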

Data Extraction and Integration into Business Systems

Finally, data gets extracted from documents and structured based on rules and key-value pairs. The data is then mathematically validated (where applicable) and intelligently checked for errors.

When errors or anomalies are found, the ICR system, like Grooper, flags them and sends them to a queue for a human operator to review. The documents are processed and extracted document data is automatically entered into downstream business systems (such as ECM or ERP), databases, or accounting systems.
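The validate-then-route flow described above might look like the following sketch. The field names (`id`, `line_items`, `total`) and the single arithmetic rule are hypothetical; a real deployment would define its own fields and validation rules.

```python
from decimal import Decimal, InvalidOperation


def validate_invoice(fields):
    """Return a list of problems found in extracted key-value pairs (empty list = clean)."""
    try:
        line_total = sum(Decimal(v) for v in fields["line_items"])
        stated_total = Decimal(fields["total"])
    except (KeyError, InvalidOperation):
        return ["missing or non-numeric amount"]
    if line_total != stated_total:
        return [f"line items sum to {line_total}, but total reads {stated_total}"]
    return []


def route(documents):
    """Split documents into those ready for export and those queued for human review."""
    ready, review_queue = [], []
    for doc in documents:
        problems = validate_invoice(doc)
        if problems:
            review_queue.append((doc["id"], problems))
        else:
            ready.append(doc["id"])
    return ready, review_queue


docs = [
    {"id": "A-1", "line_items": ["19.99", "5.01"], "total": "25.00"},
    {"id": "A-2", "line_items": ["10.00", "7.50"], "total": "11.50"},  # "17.50" misread as "11.50"
    {"id": "A-3", "line_items": ["12.O0"], "total": "12.00"},  # letter O read instead of zero
]
ready, review_queue = route(docs)
print(ready)
print(review_queue)
```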

What is Intelligent Word Recognition?

Intelligent word recognition (IWR) recognizes and extracts hand-written and printed character data, but also cursive handwriting.

ICR software recognizes on the character level, whereas IWR looks at full words and phrases. It also has the capability of capturing unstructured data and is a different evolution of hand-written ICR.

This is not to say that intelligent word recognition is going to or should replace traditional OCR or ICR systems, as IWR is optimized for processing real-world documents that have a free form and hard-to-recognize data fields and as such are not suitable for ICR. (See example above).

So the best application of IWR is on documents where OCR or ICR would have a very tough time (cursive handwriting); but also with enough instances where the other option (manual entry) would involve substantial manual work.

How Does ICR Recognize Proper Nouns and Other Important Words?

If you need to recognize important words that are not in a lexicon of common words, this is where intelligent character recognition really shines.

There are three easy ways to solve this:

- The easiest way is to import custom lexicons for words related to your organization or industry. You may have medical terms or even customer / company information that you need to match against. Using a custom lexicon will provide even better chances at finding the right match.

- Another method for improving OCR character accuracy is something called “fuzzy matching.” Fuzzy matching is a method of providing weighted thresholds to characters and allowing the software to substitute characters based on likely good replacements. For example, the software would be allowed to try an “o” when a “0” provides a bad result. Same for an “l” instead of a “1”, etc.

- Out-of-the-box OCR like Transym, Tesseract, Azure, ABBYY intelligent character recognition, and Prime all provide more accurate ICR results when combined with an advanced character recognition solution, like Grooper.
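The first two approaches can be combined in a short sketch: try substituting commonly confused characters and check each variant against a custom lexicon. The `CONFUSABLE` table and the lexicon entries are illustrative assumptions, and this simplified version treats every substitution equally rather than applying the weighted thresholds described above.

```python
from itertools import product

# Hypothetical confusion table: each character maps to alternatives worth trying.
CONFUSABLE = {"0": "oO", "o": "0", "O": "0", "1": "lI", "l": "1", "I": "1", "5": "S", "S": "5"}


def variants(text, max_variants=1000):
    """Yield the text itself, then versions with confusable characters swapped."""
    options = [ch + CONFUSABLE.get(ch, "") for ch in text]
    for count, combo in enumerate(product(*options)):
        if count >= max_variants:
            break  # keep the search bounded for long strings
        yield "".join(combo)


def fuzzy_lookup(text, lexicon):
    """Return the first variant found in the lexicon (case-insensitive), else None."""
    lowered = {entry.lower(): entry for entry in lexicon}
    for candidate in variants(text):
        hit = lowered.get(candidate.lower())
        if hit is not None:
            return hit
    return None


custom_lexicon = ["Grooper", "ibuprofen", "claim"]
for raw in ["Gr00per", "c1aim", "ibupr0fen", "xyz"]:
    print(raw, "->", fuzzy_lookup(raw, custom_lexicon))
```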

Applications of Intelligent Character Recognition

Intelligent character recognition was created for the purpose of real-world data capture off physical documents and intelligently converting it into usable electronic forms. ICR is being used every day by these industries:

Healthcare

One great example is shown in this case study on medical document data extraction. In this instance, ICR is being used to process insurance forms (that include handwritten medical records) weeks faster and get patients their needed medical equipment.

More generally speaking, character recognition software is being used in the healthcare industry to digitize huge amounts of documents with patient data, such as:

- Medical records

- Prescriptions

- Patient intake forms

- Patient medical history

The benefits are significant time savings, cost savings, and improved patient care.

Finance

Financial institutions such as banks and credit unions use ICR to streamline workflows through Optical Mark Recognition to get information off loan applications, forms, surveys and even checks.

Insurance

For starters, our case study featured at the top of this blog specifically shows how a leading insurance company uses ICR and AI to automate contract processing. This insurance company uses Grooper ICR to capture any handwriting on forms that come in from their customers.

They were formerly using human operators to tackle the painstaking job of manually entering the same information. Their customers (funeral homes) no longer have to spend as much time on paperwork, and now have more time to comfort grieving families.

Generally speaking, companies use ICR software to speed up the processing of insurance claims and policyholder information.

Legal

Law firms and legal departments use ICR solutions to extract handwritten information from contracts, case files, or handwritten forms.

The technology is also being used to automate the legal discovery phase, a process that was traditionally performed with painstaking manual work. It is usually performed by attorneys or highly paid legal assistants, so automation through ICR saves significant costs.

Retail

Many retail businesses use our ICR and OCR software, Grooper, to process handwritten documents like paper order forms, invoices, and many other documents with customer information.

E-commerce and online-based businesses use ICR to collect electronic signatures and log them into databases to assist with know-your-customer documentation.

Accounts Payable

Every single industry deals with massive amounts of accounts payable data, in the form of invoices, receipts, bills of lading, etc.

ICR technology can be a vital component to achieving the highest rates of recognition accuracy, which drastically cuts down on human time needed to key the data into line-of-business systems.

About the Author: Brad Blood

Senior Marketing Specialist at BIS