It’s easy for a big data project to fail. A first-time project is like fragile seeds we hope will sprout and grow indoors before spring arrives. The environment must be perfect, and if it’s your first time…

You need expert guidance. Everything must be just right – and there’s no one-size-fits-all solution. But one thing is for sure – there’s a right time. With gardening, timing is everything, and with data projects the stars must be aligned. Or so it seems.

Big data projects are deceptively difficult. We know: we’ve seen massive successes, massive failures, and we’ve even headed off potential disasters by not engaging in the first place. Here’s the difficulty that’s hard to comprehend at first: most organizations’ data is spread all over the place with nothing unifying it and no single platform for storage or retrieval.

Doesn’t seem so bad, does it? Let’s just keep that “easy button” kind of feeling and move on to outcomes.

Outcomes and Expectations for Your Big Data Projects

When we ask what’s most important, it’s easy to talk about outcomes. For our garden, we want luscious veggies.

For our big data projects we’re focused on:

- Improving workflows

- Reducing manual data entry

- Combining data into dashboards

- Feeding RPA

- Or simply creating a way to universally search and retrieve information.

We all pretty much know what we want.

Ultimately, how do we get there? By carefully balancing the expectations of cost, speed, and accuracy. Rarely does a project get the best of all three. But even before you can make this decision, that pesky data gets in the way.

How to Succeed with the Most Cost-Effective, Fast, and Accurate Big Data Projects

People and vendors who haven’t been in the trenches of an enterprise data project (or who want to avoid the painful truth) might simply give you a 7-step data project plan. That’s great. But it can’t possibly prepare you for the journey ahead.

We all like to talk about the sexy, shiny tools:

- RPA

- Machine learning

- Predictive clustering algorithms

- Deep learning neural nets, and so on.

But the reality is that most organizations are struggling to get to the point where these tools maximize business value. Everyone loves a good experiment, but if you’re after real business outcomes and bottom-line results, prepare to pay the price.

The most challenging step in any big data project is dealing with unstructured data. You’re probably sick of hearing about unstructured data. It’s been preached about for years, but the reality often doesn’t set in until you’re in the middle of it.

Real-World Example of the Unstructured Data Problem in Big Data Projects:

Let’s say you sell a product that helps consumers make a purchase based on some kind of real-world data. And let’s say a majority of that data is created outside of your organization. And it’s great! You have access to hundreds or thousands of different data points from all over the world.

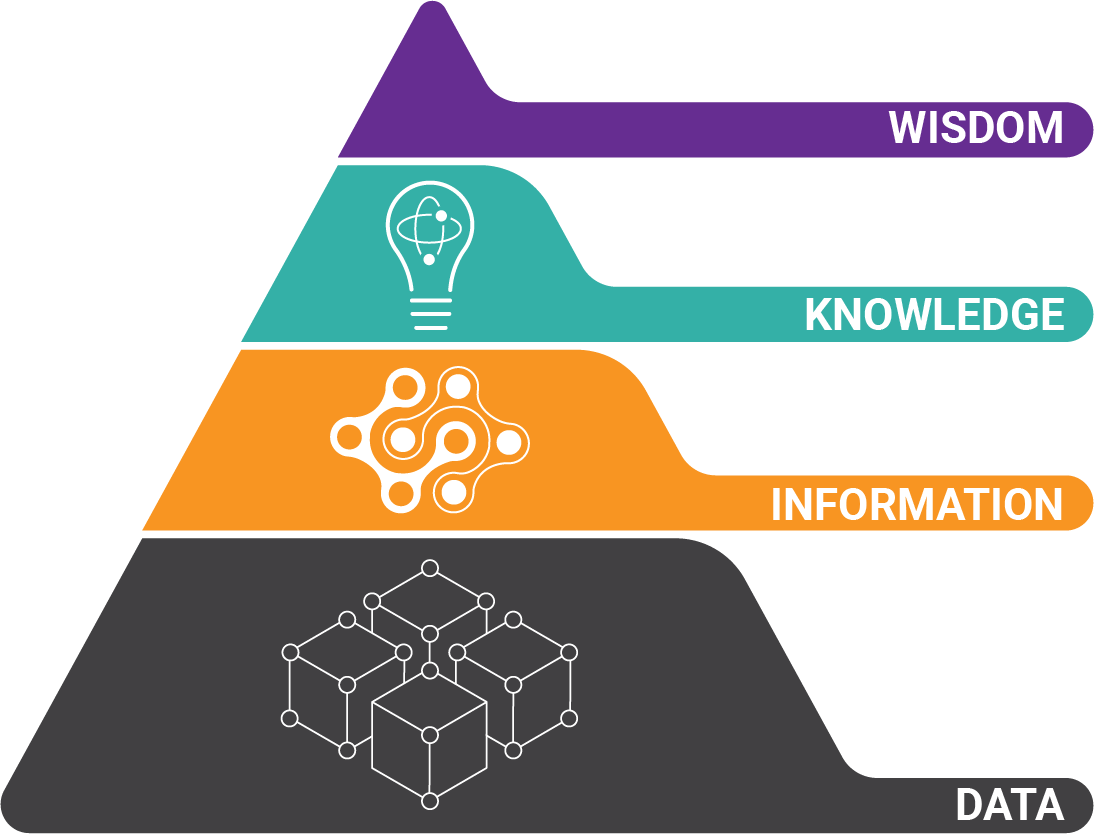

All you need to do is:

- Get the data

- Clean it

- Enrich it

- Gather insights

- Infuse your product with all the relevant information for your customer to make the best purchase.

Guess which part of that is the most difficult? Getting the data.

Just getting the data is the most difficult step in a big data project. Why? Because you can’t control the source. If you have hundreds of different sources all reporting similar data elements, you have the number of data elements times the number of sources as your difficulty rating. 200 sources multiplied by 500 data points gives you an easy 100,000 variables to deal with.
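
As a rough sketch of that arithmetic, here is the multiplication in Python. The function name `mapping_workload` is our own invention for illustration, and the calculation assumes the worst case, where every source labels or formats every data element in its own way.

```python
def mapping_workload(num_sources: int, elements_per_source: int) -> int:
    """Worst-case count of source/element combinations to handle.

    Assumes no two sources share a label or format for any element,
    which is the pessimistic case described in the text.
    """
    return num_sources * elements_per_source


# The figures from the example above: 200 sources, 500 data points each.
total = mapping_workload(200, 500)
print(f"{total:,} source/element combinations")  # prints "100,000 source/element combinations"
```

In practice many sources will share formats, so the real number is usually lower. The point is that it grows with every source you add.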

And to make matters worse, if a significant share of the source data is in PDF format (our real-world experience shows this is the case), each document must be made machine readable before you can even attempt “getting” the data.

Here’s where a data science platform becomes a requirement for these kinds of projects. You can’t ignore the pesky unstructured data because it represents such a large portion of available data. Here’s where having a third-party expert makes the difference in big data project success. Here’s our advice…

This is the most critical step, and the one that will make or break your big data project...

Before you get too far into planning what you’re going to do with your data, make a plan for exactly how you’re going to get it.

Here's How to Prepare for the Enormity of Data Extraction

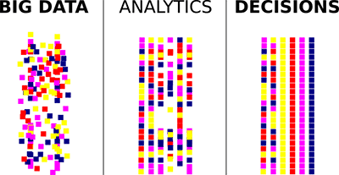

Data extraction is the most difficult and hardest-to-predict step in any big data project. Success hinges on it. Failing to fully understand the process of getting information into a meaningful structure will derail your project and destroy its predicted return on investment. On the flip side, creating structure from unstructured data enables RPA and fuels downstream analytics.

Locate all sources of available data and look at all representative samples. Make a plan for how you will extract the information.

- Balancing cost, speed, and accuracy, what is the best approach? You have two options: manual data entry and intelligent document processing. Do not assume that this is easy.

- But what about PDF documents? Digital documents often contain no embedded data, or have corrupt metadata. Machine reading these pages will require an extensive toolset of image processing, optical character recognition, and error handling (both built-in and human review).

- And if you are in the business of selling data, enriching PDF documents with accurate and structured data makes your documents even more valuable…
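
To make the "built-in and human review" error handling from the list above a little more concrete, here is a minimal sketch of one common pattern: accept extracted values that look trustworthy and route the rest to a person. Everything in it is an assumption for illustration. The `ExtractedField` class, the `confidence` score, and the 0.90 cutoff are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # 0.0 to 1.0, as reported by a hypothetical extraction step


REVIEW_THRESHOLD = 0.90  # assumed cutoff; tune it against your own samples


def route_fields(fields, threshold=REVIEW_THRESHOLD):
    """Split extracted fields into auto-accepted and human-review lists."""
    accepted, needs_review = [], []
    for field in fields:
        # Built-in check: empty values never pass, whatever the score says.
        if field.value.strip() and field.confidence >= threshold:
            accepted.append(field)
        else:
            needs_review.append(field)
    return accepted, needs_review


fields = [
    ExtractedField("invoice_total", "1,204.50", 0.98),
    ExtractedField("invoice_date", "2O21-03-17", 0.61),  # "O" misread for "0"
    ExtractedField("vendor_name", "   ", 0.95),           # blank despite high score
]
accepted, needs_review = route_fields(fields)
print([f.name for f in accepted])      # ['invoice_total']
print([f.name for f in needs_review])  # ['invoice_date', 'vendor_name']
```

Where you set the threshold is the cost, speed, and accuracy trade-off in miniature: a higher cutoff sends more work to people, a lower one lets more errors through.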

Big Data Projects Must Have a Master Data Model

Once a plan for cheap, fast, and accurate data extraction has been determined, you must create a master data model to bring the data into your database. Because the same information will be represented in different formats and with different document labels, a subject matter expert is critical to defining what the data is and how it should be interpreted.
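
Here is a small sketch of what that can look like in practice, assuming a simple alias table. The field names and aliases below are made up for illustration. In a real project, the subject matter expert decides what goes in this table.

```python
# Hypothetical master data model: canonical field -> labels seen in source documents.
MASTER_FIELDS = {
    "product_name": {"product", "item", "item name", "description"},
    "unit_price": {"price", "unit cost", "cost per unit"},
}


def normalize_label(label: str) -> str:
    """Lowercase, treat underscores as spaces, and collapse whitespace."""
    return " ".join(label.lower().replace("_", " ").split())


# Reverse lookup: normalized alias (or the canonical name itself) -> canonical field.
ALIAS_TO_FIELD = {}
for field, aliases in MASTER_FIELDS.items():
    ALIAS_TO_FIELD[normalize_label(field)] = field
    for alias in aliases:
        ALIAS_TO_FIELD[normalize_label(alias)] = field


def to_master_record(source_record: dict):
    """Map one source record onto the master model.

    Returns (mapped, unmapped). Unmapped labels are the ones a subject
    matter expert still needs to look at.
    """
    mapped, unmapped = {}, {}
    for label, value in source_record.items():
        field = ALIAS_TO_FIELD.get(normalize_label(label))
        if field is None:
            unmapped[label] = value
        else:
            mapped[field] = value
    return mapped, unmapped


mapped, unmapped = to_master_record(
    {"Item Name": "Widget", "Cost Per Unit": "4.50", "SKU#": "A1"}
)
print(mapped)    # {'product_name': 'Widget', 'unit_price': '4.50'}
print(unmapped)  # {'SKU#': 'A1'}
```

Keeping the unmapped labels rather than silently dropping them gives the expert a running list of what the model doesn't yet cover.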

Data quality tools (hopefully part of your data science workbench) will be necessary to accurately ingest, standardize, and label data. Without accurately labeled data, your big data project will have nothing reliable to process.

Clean Data is Easy to Enrich

When your big data is nicely structured, the process of enriching it with other data sources makes it even more powerful and useful. Because you can’t possibly know all the questions you’ll end up wanting to ask, prepare for the future ahead of time.
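
As a simple illustration, once records share a clean key, enriching them can be as small as a lookup. This is only a sketch under assumptions: the `region_code` key and the reference table are invented for the example, and real enrichment sources will be messier.

```python
def enrich(records, reference, key):
    """Attach reference attributes to each record by matching on `key`.

    Fields already present on the record win over enrichment fields,
    so carefully extracted values are never overwritten.
    """
    enriched = []
    for record in records:
        extra = reference.get(record.get(key), {})
        enriched.append({**extra, **record})
    return enriched


# Hypothetical structured records and a hypothetical external reference table.
records = [
    {"product_name": "Widget", "region_code": "N1"},
    {"product_name": "Gadget", "region_code": "ZZ"},  # no match in the reference
]
reference = {"N1": {"region_name": "North", "sales_tax": 0.05}}

for row in enrich(records, reference, key="region_code"):
    print(row)
```

Records without a match pass through unchanged, which makes it easy to see where the enrichment source has gaps.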

The more data you label, the better. When you deploy machine learning or deep learning algorithms to make sense of your data, reliability is entirely dependent on the accuracy and transparency of your data.

Clean data is also easier to integrate with downstream applications and analytics tools. By paying the price to ensure accuracy before amassing data, you’ll get more value than you can anticipate at the beginning of the project.

About the Author: Brad Blood

Senior Marketing Specialist at BIS