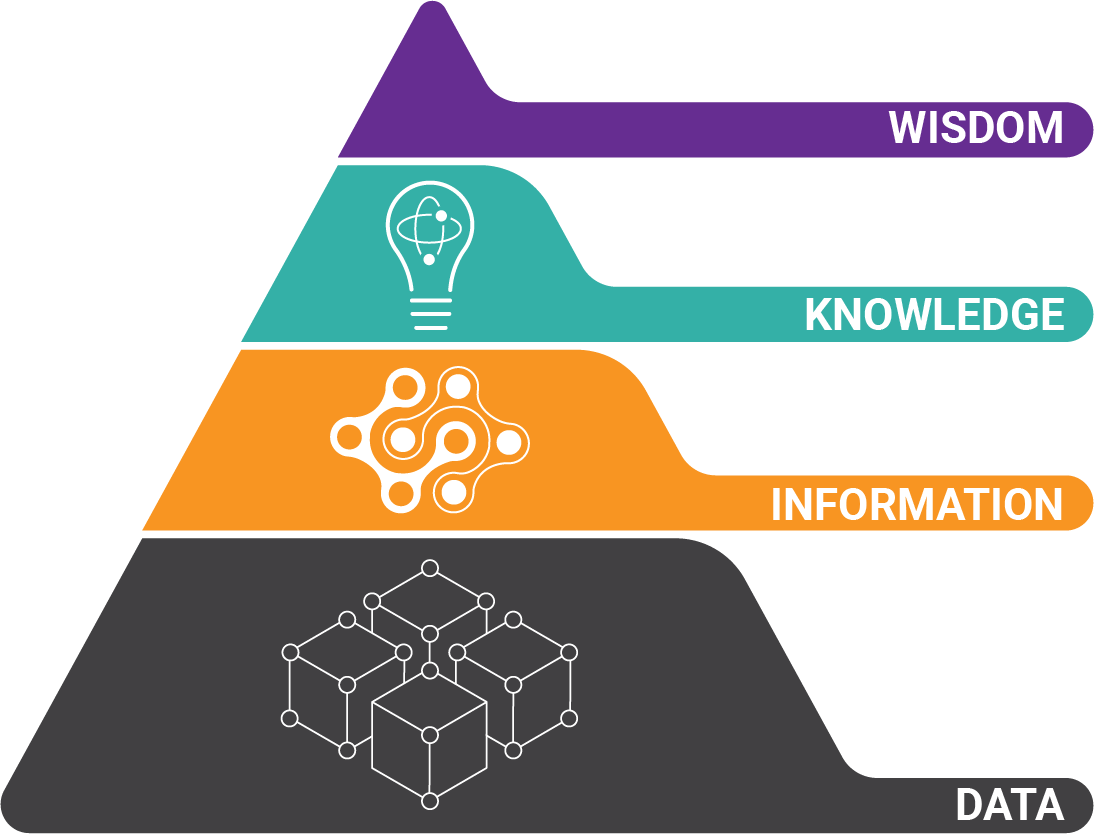

Today's world is a sea of information. What's the relationship between the way humans navigate it all and the way we build A.I. to do the same thing?

This article explores the way human decisions shape knowledge and beliefs, and why we believe things that aren't true.

First, Think About How Babies Learn

If we learn how human beliefs are formed, it is that understanding that must be used to build better, and more ethical A.I. Otherwise, we risk building in dangerous bias from the get-go.

So we know that babies go from knowing relatively nothing to knowing quite a lot. And here's where our choices come into play. For every subject a child focuses on, there's huge opportunity cost.

So we know that babies go from knowing relatively nothing to knowing quite a lot. And here's where our choices come into play. For every subject a child focuses on, there's huge opportunity cost.

With all the information available, nobody can know it all so we have to choose what we learn and what we ignore.

For all the knowledge we gain, we ignore even more. As we devise ways of building machine understanding, will we duplicate this same level of ignorance?

Is Creating an Ideal Artificial Intelligence Even Possible?

Is it even possible to build neutral A.I.? The key is in understanding how humans learn. Without pausing to consider human psychology, the problem of building bias into our algorithms is imminent.

After you read the 5 points below, you'll have a greater appreciation for how difficult achieving neutral A.I. really is - and how easy it is to build corrupt AI.

Discover These 5 Ways That AI is Corrupted

At the end, you'll see why ethics is so important in A.I. and why neutrality in tech is extremely difficult to build.

1) Skewing Toward Beliefs Instead of Facts

The first concept of learning is that we continuously form beliefs. And for the sake of this article, let's define beliefs as probabilistic expectations. (Keep reading to understand why we might believe something that isn't true.)

But what do expectations have to do with what we know, or choose to know? It's all about something called surprisal value. If we're extremely surprised, or under-surprised by information, we tend to pass over it. This is just human nature. Probably something to do with reserving brain compute for more important tasks...

But what do expectations have to do with what we know, or choose to know? It's all about something called surprisal value. If we're extremely surprised, or under-surprised by information, we tend to pass over it. This is just human nature. Probably something to do with reserving brain compute for more important tasks...

Just because something doesn't meet our expectation of what we feel like it should be doesn't mean that it isn't worth considering.

Will we build machine algorithms that dedicate equal compute to ideas and facts regardless of potential importance?

2) Machine Training to Not Use Data From Multiple Sources

Secondly, our certainty in a belief diminishes interest. What you think you know determines your curiosity - not what you don't know!

Studies show that if you think you know the answer to something, and are presented with the answer (even if you are wrong), you're actually more likely to ignore it. This is the reason why we can get stuck with certain beliefs that clearly do not align with reality.

Will a machine with a programmed understanding be constructed to consider alternative truths?

Watch this parody to consider the importance of machine training!

3) Not Getting and Using Feedback

Third, certainty is driven by feedback, or lack thereof. If a learner is presented with questions, and randomly gets them correct, it makes feel certain they have an understanding they likely do not truly have.

And without feedback, the level of certainty will be driven by irrelevant factors.

Try this example. Ugly old woman, or beautiful princess?

If I'd only shown you one of the pictures, your answer would have been correct, but you might not have known the reality of the picture. How will we build algorithms that don't form permanent certainty based on initial training or feedback?

If I'd only shown you one of the pictures, your answer would have been correct, but you might not have known the reality of the picture. How will we build algorithms that don't form permanent certainty based on initial training or feedback?

4) Who is Performing the Coding - And What Are Their Biases?

The fourth way we come to know what we know is that less feedback may encourage overconfidence. If two people use the same word, in the same context, do they truly have the same concepts. Quid pro quo? :-)

The easiest concepts to consider are political. When two people talk about Ronald Reagan, or Karl Marx, their basic concepts could easily be drastically different. So, without a discussion (feedback), we might be too confident in what we believe we understand.

The way that concepts are programmed into machine understanding is a complicated and complex endeavor. Who gets to decide this? And how will we ensure unbiased, or multi-faceted information is used?

5) Making Decisions Quickly - With Only Little Data

The fifth element of knowledge acquisition is that we actually form beliefs fairly quickly. Think about the last time you performed a Google search to discover an answer.

Did you spend the time to look at multiple points of view, or did you rapidly draw a conclusion? Early evidence counts more than later evidence.

People obviously form beliefs based on the content of online search results. Decisions are made that affect their lives, children, health, and even their views on public policy.

Look up "Activated Charcoal" on YouTube. Watch a handful of videos. What do you think? Fairly confident that activated charcoal is useful for wellness?

The Future of AI is Murky

Will the machines we program tend to learn the way we do?

Or will we take a deep look into human psychology and build things differently?

This isn't an article on general A.I., or document AI but I hope this helps create an understanding of why ethics and A.I. is such an important topic.

This article was written from the content of Celeste Kidd's presentation at NeurIPS.

About the Author: Brad Blood

Senior Marketing Specialist at BIS