The Data Science Workbench is a Relatively New Idea

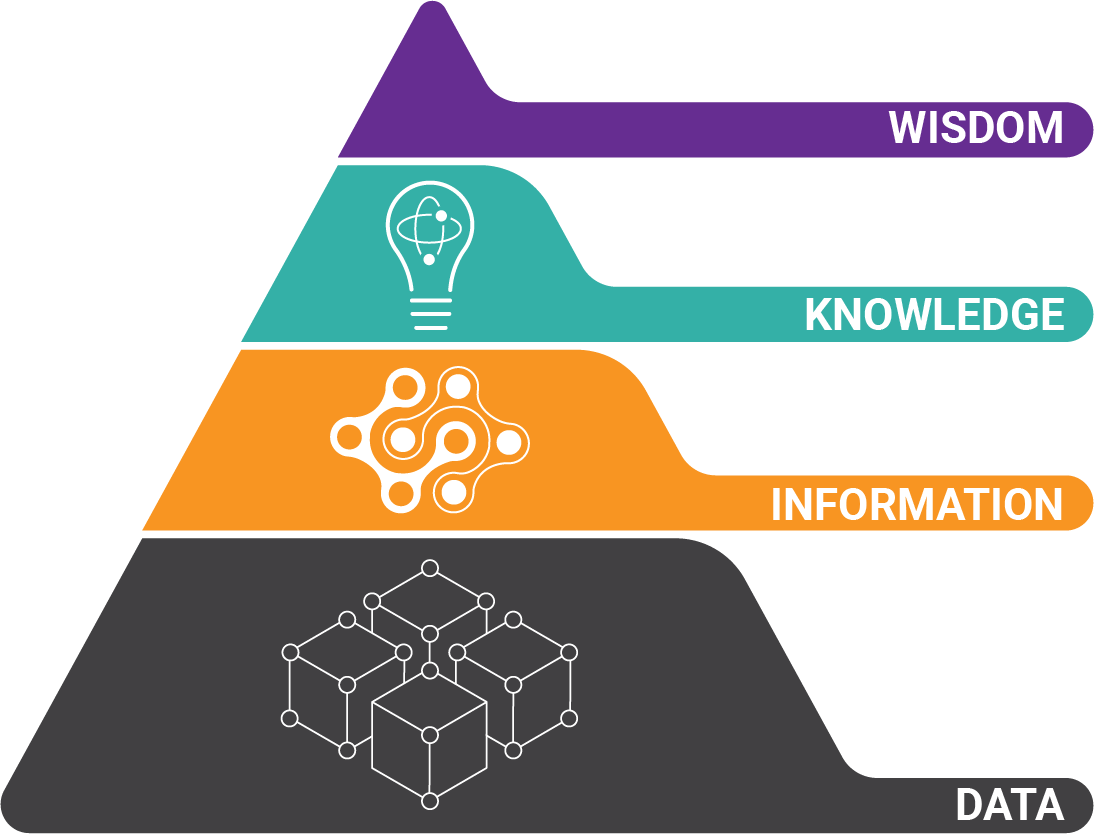

A data science workbench is an industry-agnostic, scalable, unified, and transparent platform that equips users to perform complex data-related tasks on a variety of information sources using multiple toolsets.

That’s a mouthful! While it may sound complicated, a data science workbench actually makes data science tasks easier to perform. Instead of manually moving data between tools, it provides an intelligent flow from the source of information all the way to downstream applications.

Technology is evolving along with the needs of the intelligent (digitally transforming) enterprise. While tools like R, S, and Python are part of the typical data scientist’s toolkit, they cover only one facet of a data science platform.

A data science workbench enables technical users to quickly build classification and machine learning models, view results in real time, and make adjustments as needed for rapid insight.

Data Science Workbench for Document Processing

To meet the requirement of being industry-agnostic, a data science workbench for document processing offers the tools needed to work with any document type or format. Because humans inherently understand the intent of a document, it’s easy for us to overlook the challenges associated with processing and extracting important information from everyday documents.

Take an invoice, for example. Even without any text on the page, the structure of the document tells us it’s likely to be an invoice. And once we add in the text, anyone can see the important information.

People accustomed to looking at legal documents quickly scan pages to find important dates, sections, and clauses.

In the case of industry-specific reports and forms, subject matter experts just as easily sift through information to find exactly what they’re looking for.

A data science workbench provides the tools necessary for a business user or subject matter expert to create an actionable machine-understanding of information from virtually any source, and integrate that data as needed.

How a Data Science Workbench Works

A data science workbench provides prebuilt tools that enable intelligent workflows for any data type. In the document processing example, the machine must be able to look at both the layout and the content of the document to make decisions about the information there.

Technologies like computer vision and machine learning are cornerstones of data science. They enhance traditional tools like optical character recognition and lexicons so that data can be identified, labeled, and extracted with high accuracy.
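To make the lexicon idea concrete, here is a minimal sketch in Python of labeling text that has already come out of optical character recognition. The `LEXICON` entries, the field names, and the `label_lines` function are all hypothetical, chosen only for illustration; a real workbench would combine this kind of lookup with layout analysis and trained models.

```python
# Hypothetical lexicon: each field label maps to keywords that often
# introduce that field on an invoice. Longer keywords come first so that
# "invoice number" is tried before "invoice no".
LEXICON = {
    "invoice_number": ("invoice number", "invoice no", "invoice #"),
    "due_date": ("due date", "payment due"),
    "total": ("total due", "amount due", "total"),
}


def label_lines(ocr_lines):
    """Return {label: value} for lines that start with a lexicon keyword."""
    fields = {}
    for line in ocr_lines:
        lowered = line.lower()
        for label, keywords in LEXICON.items():
            if label in fields:
                continue  # keep the first value found for each label
            for keyword in keywords:
                if lowered.startswith(keyword):
                    # Everything after the keyword, minus separators.
                    value = line[len(keyword):].strip(" :\t")
                    if value:
                        fields[label] = value
                    break
    return fields


# Assumed OCR output for a simple invoice.
sample = ["Invoice No: 10023", "Due Date: 2024-03-01", "Total Due: $1,250.00"]
fields = label_lines(sample)
```

A plain keyword lookup like this only works when the OCR text is clean and the labels are predictable, which is why it is usually paired with computer vision and machine learning rather than used alone.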

In the case of non-document data like very large text files, the machine must make decisions about information based on the content of the data. This is where data pre-processing tools like Porter stemming, tokenization, and natural language processing are used, all within the same platform.
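As a rough illustration of that pre-processing step, the sketch below tokenizes text and reduces words to a common root. Note that `crude_stem` is a deliberately simplified suffix stripper, not the actual Porter algorithm, which applies several ordered rule phases; the function names and the suffix list are assumptions made for this example.

```python
import re
from collections import Counter

# Hypothetical suffix list. A real Porter stemmer uses ordered rule phases
# with conditions on the remaining stem, which this sketch does not attempt.
SUFFIXES = ("ing", "ed", "es", "s")


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def crude_stem(token: str) -> str:
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def term_counts(text: str) -> Counter:
    """Count stemmed tokens so that word variants are grouped together."""
    return Counter(crude_stem(token) for token in tokenize(text))


counts = term_counts("Processing continues: processed invoices, one invoice pending.")
```

Grouping variants such as "processing" and "processed" under one root is what lets later steps, like classification, treat them as the same signal.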

Combining Machine Intelligence with Extraction

A data science platform wouldn’t be complete without the ability to do something with processed and labeled data. In the real world, data must be exchanged between multiple systems that have different data structures.

Identifying and labeling information is only half the battle. A data science workbench provides the tools necessary to integrate data within a master data model. Since integration works in multiple directions, data identified by the platform is cross-checked with external databases to fill in missing information or to provide validation. With information flowing in and out of the data science workbench, a complete understanding of the data is formed before information is integrated into downstream applications.
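The cross-checking described above might look something like the following sketch. The `VENDOR_DB` dictionary stands in for an external database, and the field names and the `reconcile` function are hypothetical; the point is only to show missing values being filled in and mismatches being flagged for review.

```python
# Stand-in for an external vendor database, keyed by a hypothetical vendor ID.
VENDOR_DB = {
    "V-100": {"vendor_name": "Acme Supply", "currency": "USD"},
}


def reconcile(extracted: dict, reference: dict) -> tuple[dict, list[str]]:
    """Fill missing fields from the reference record and list any conflicts."""
    merged = dict(extracted)  # leave the caller's record untouched
    conflicts = []
    for key, ref_value in reference.items():
        value = merged.get(key)
        if value in (None, ""):
            merged[key] = ref_value  # fill in missing information
        elif value != ref_value:
            conflicts.append(key)  # validation failed; flag for review
    return merged, conflicts


# Assumed extraction result: the vendor name was not captured and the
# currency disagrees with the reference record.
extracted = {"vendor_id": "V-100", "vendor_name": "", "currency": "EUR"}
merged, conflicts = reconcile(extracted, VENDOR_DB[extracted["vendor_id"]])
```

In this sketch a conflicting value is kept as extracted and only reported, on the assumption that a person or a later rule decides which source to trust.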

About the Author: Brad Blood

Senior Marketing Specialist at BIS